Kubernetes is today’s foremost container orchestration platform in the cloud-native ecosystem. It’s an open source system with rich features, backed by a large and growing community. Self-healing is one of its most prominent qualities. Functionally, this is made possible by the Kubernetes controllers, of which the deployment controller is one of the most widely used. The deployment controller runs a continuous control loop to ensure that the current state matches the desired state for deployment objects running in the cluster.

Deployments are declarative objects that let you describe the lifecycle of a particular application: its container configuration, the number of pod replicas, and how it should be updated when a new version is rolled out. Despite their declarative nature, they can also be managed imperatively through the command line, like other Kubernetes resources.

Understanding how deployments work is an integral part of the application lifecycle in Kubernetes. In this post, you’ll learn what deployments are, how they function, what their main features are, and how to operate them.

Understanding Kubernetes Deployments

Deployments in Kubernetes belong to a group of resources that are managed by controllers. Controllers ensure that the system’s live (or actual) state matches the desired state as described by requests sent to the Kubernetes API server. There are multiple controllers, and each one is typically responsible for a single type of resource. Controllers continuously watch the API server for events associated with the resource type they manage. Once a change is detected, the controller performs the work required to move the current state of the underlying resource to its new desired state.

Controllers in Kubernetes can operate based on a powerful layered architecture that lets them delegate work to other controllers. Different resources working together like this can provide an enhanced and cohesive set of functions.

A good demonstration of delegation is how the pod, ReplicaSet, and deployment resources work together in the container orchestration process. Pods are ephemeral wrappers responsible for running one or more containers, along with their resource requests, on a worker node in your cluster. Their sole purpose is to run container instances of an application. However, their ephemeral nature makes standalone pods a poor fit for production workloads. As such, the ReplicaSet controller is used to achieve resilience and performance by managing the state of multiple pod instances, while delegating the responsibility of running the containers to the pod resource. ReplicaSets are, in turn, managed by deployments. Deployments are resources that operate at a higher level than ReplicaSets and pods. As the name indicates, deployments are used to deploy and update applications in a declarative manner.

Although the container orchestration process also involves ReplicaSets and pods, deployments operate at the highest level. Here are some use cases for deployments in Kubernetes:

- Scaling: Deployments let you scale your application instances (better known as replicas) up or down, either manually or through an autoscaler, to match the load they’re experiencing.

- Rollouts: Deployments let you move from one version of your application to the next in an automated way. There are different strategies that can be applied, and deployments let you configure how this process is carried out.

- Rollbacks: Deployments let you roll back to a previous application version in the case of a software error in a specific version. They retain the revision history within a certain limit so that you can easily roll back to the desired version.

Creating Kubernetes Deployments

You can create a deployment either procedurally (or imperatively) with kubectl commands in the terminal, or declaratively with configuration files, typically known as manifests. The imperative approach is typically taken for experimentation or for managing certain configurations dynamically. The declarative approach relies on manifest files that define the desired state.

In practice, you can combine them. For example, you can use a manifest file to define some aspects of the deployment’s desired state such as the rollout process, whereas you manage the replicas imperatively because you want to dynamically change this value based on traffic and resource usage of its pods in the cluster.

When you create a Kubernetes deployment object, the underlying ReplicaSet and pods will be created under the hood, and each object will fulfil its respective role in the container orchestration lifecycle. So when using deployments, it’s the deployment’s ReplicaSet that creates and manages the relevant pods, not the deployment itself. As such, deployments provide a great deal of abstraction and simplify the process of scaling and managing an application by defining the desired state. Deployment manifest files include a replicas field that sets the number of pod replicas the ReplicaSet should maintain, as well as a template field that specifies the pod configuration.

Below is an example of a deployment manifest file in YAML. It uses the apps/v1 API version, which requires a selector that matches the labels in the pod template:

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nodejs-application
spec:
  replicas: 3
  selector:
    matchLabels:
      app: nodejs-application
  template:
    metadata:
      name: nodejs-application
      labels:
        app: nodejs-application
    spec:
      containers:
        - image: my-docker-hub-account/nodejs-application:1.0.0
          name: nodejs-application

To create this resource in your cluster, save it as a YAML configuration file (e.g., deployment-manifest.yaml) and deploy it to your cluster with the following command:

$ kubectl apply -f <manifest-file>.yaml

The method above is the preferred approach, but you can also create a deployment using the command line. As explained before, this is a procedural approach:

$ kubectl create deployment nodejs-application --image=my-docker-hub-account/nodejs-application:1.0.0 --replicas=3

Another method for creating deployments is through the Kubernetes Dashboard. It’s a web application that provides a rich UI for managing the lifecycle of your cluster and its workloads. Under the Workloads section, operators can use the deploy wizard to create a deployment resource. To get started with the Kubernetes Dashboard in your cluster, you can deploy it with this command:

$ kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v2.6.1/aio/deploy/recommended.yaml

You can then proceed to configure access by following the steps outlined in the Kubernetes Dashboard documentation.

Management and Scaling of Deployments

This section highlights some of the main operations that you can perform with deployments and how to manage them.

Scaling

Software applications in production constantly experience variations in their network traffic load. When traffic is high, maintaining a static number of container instances can easily result in degraded performance for end users or clients. Conversely, when traffic is low, running more container instances than needed wastes cluster resources.

You can use deployments to scale an application up or down by modifying the replicas field either imperatively using the command line, or declaratively using a manifest file. This operation will delegate the request to the appropriate ReplicaSet.

To scale an application, execute the following command:

$ kubectl scale deployments <deployment-name> --replicas=3

In practice, it’s a good approach to manage replicas automatically through the use of the Horizontal Pod Autoscaler (HPA). The HPA watches pod metrics and scales the pods in your cluster out or in based on resource configurations and thresholds, e.g., average resource utilization. It’s defined like any other resource in the Kubernetes API and targets a single scalable resource, such as a specific deployment. The HPA relies on metrics to inform it of pod resource usage. If more pod replicas are required, it modifies the deployment replica specification. The deployment will in turn act on the new desired state that was updated by the HPA.
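As a rough illustration of the relationship described above, the manifest below sketches an HPA that targets the nodejs-application deployment from the earlier example. The replica bounds and the 70% CPU target are assumed values chosen for illustration, not recommendations. The sketch also assumes that a metrics source such as metrics-server is installed in the cluster and that the containers declare CPU requests, since utilization is calculated relative to those requests.

```yaml
# Sketch of an HPA for the example deployment (values are illustrative assumptions)
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: nodejs-application
spec:
  # The single scalable resource this HPA manages
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: nodejs-application
  minReplicas: 3        # assumed lower bound
  maxReplicas: 10       # assumed upper bound
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70   # assumed threshold, as a percentage of requested CPU
```

Once an HPA like this is applied, it adjusts the replica count of the deployment itself, so a fixed replicas value in the deployment manifest can end up competing with the autoscaler each time the manifest is reapplied.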

Rollbacks and Rollouts

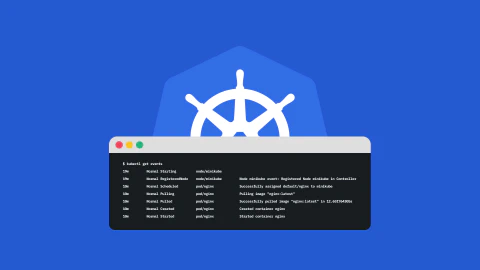

Deployments let you roll back to a previous application version because they keep a revision history of their rollouts. This revision history is attached to the deployment object and is specifically stored in the underlying ReplicaSets. When a new application version is rolled out, the previous ReplicaSets aren’t deleted. Instead, they are scaled down to zero replicas and kept as objects in the etcd datastore. This is what makes the rollback functionality possible.

You can follow one of two strategies when you need to deploy a new version of your application: the Recreate strategy or the RollingUpdate strategy.

The Recreate strategy is fast and simple. It terminates all running pods as soon as the pod template in the deployment changes (for example, when a new container image is specified), and only then creates pods for the new version. A drawback with this strategy is that it can result in downtime of your application because of the immediate deletion of all replicas.

The RollingUpdate strategy can be considered a more graceful approach because it ensures that there are always pods running to handle application traffic while the new version is being rolled out. It is slower but safer if the priority is to avoid downtime. It will incrementally shut down the old version while spinning up pods based on the new version. You can also build more complex approaches on top of RollingUpdate to incorporate other strategies (e.g., canary, best-effort controlled rollout, ramped slow rollout).
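The pace of a rolling update is controlled through the strategy field of the deployment spec. The fragment below is a minimal sketch meant to be merged into the spec of the manifest shown earlier. The maxSurge and maxUnavailable values are assumptions picked to favor availability over speed, not values taken from this article.

```yaml
# Fragment of a Deployment manifest (merge into the existing spec)
spec:
  replicas: 3
  strategy:
    type: RollingUpdate      # the alternative is "Recreate"
    rollingUpdate:
      maxSurge: 1            # assumed: at most one extra pod above the desired count during the update
      maxUnavailable: 0      # assumed: never drop below the desired count of available pods
```

With these illustrative settings, a new pod has to become available before an old one is taken down, which trades rollout speed for continuity. Both fields also accept percentages.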

You can view the deployment’s rollout history with the following command:

$ kubectl rollout history deployment <deployment-name>

To prevent clutter, there’s a limit on the number of old ReplicaSets that are kept. This limit is specified using the revisionHistoryLimit field. The default value is ten. Old ReplicaSets beyond that limit are automatically deleted. To roll back to a previous deployment version, use the kubectl rollout undo command:

$ kubectl rollout undo deployment <deployment-name> --to-revision=1

If you roll back to a previous deployment revision, the deployment will reuse that revision’s template and update its revision number so that it reflects the latest revision. For example, if there are two versions of a deployment, the first version is revision 1 while the current version is revision 2. If you roll back to the first version (revision 1), it will become revision 3.
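The number of revisions available for rollback depends on the revisionHistoryLimit field described above. As a small sketch, the fragment below sets it explicitly in the deployment spec. The value 5 is an arbitrary assumption for illustration.

```yaml
# Fragment of a Deployment manifest (merge into the existing spec)
spec:
  revisionHistoryLimit: 5   # assumed value: keep the five most recent old ReplicaSets for rollbacks
```

Setting this field to 0 removes all old ReplicaSets, which also means there is nothing left to roll back to.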

Liveness and Readiness Probes

Liveness and readiness probes give Kubernetes operators the ability to continuously monitor the health of the containers running in the cluster.

Liveness Probes

Containers and pods are ephemeral (or short-lived) by nature and eventually come to an end of their lifecycle. Deployments can detect when pods reach the end of their lifecycle and will continuously reconcile the number of running replicas according to the desired state by starting up the relevant number of pods.

However, there are scenarios where pods and containers can appear to be running while the applications themselves are in a failed or broken state. As you’d expect, this can introduce instability and some unpredictability for workloads running in a Kubernetes environment.

Liveness probes continuously monitor the applications to detect any failures and seek to remediate them by restarting the containers according to the restartPolicy. Liveness probes give the kubelet granular insight into the operational state of the application to improve both the resilience and availability of your workloads.
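To make this more concrete, the fragment below sketches a liveness probe on the container from the earlier manifest. The /healthz path, port 3000, and the timing values are hypothetical. They assume that the application exposes an HTTP health endpoint, which this article does not specify.

```yaml
# Fragment of the pod template in a Deployment manifest
spec:
  template:
    spec:
      containers:
        - name: nodejs-application
          image: my-docker-hub-account/nodejs-application:1.0.0
          livenessProbe:
            httpGet:
              path: /healthz          # hypothetical health endpoint
              port: 3000              # hypothetical application port
            initialDelaySeconds: 10   # assumed: wait before the first check
            periodSeconds: 10         # assumed: check every ten seconds
            failureThreshold: 3       # assumed: restart after three consecutive failures
```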

Readiness Probes

Containers in Kubernetes often fit within large distributed systems that rely heavily on intercommunication. Readiness probes add robustness to this network activity by letting you build in checks to ensure that the containers in a pod are ready to receive traffic from other sources. These checks are executed by the kubelet during the lifecycle of the pod.

If you use readiness probes together with liveness probes, the readiness probes mainly decide when a pod can receive traffic, which matters most while your pods are starting up, whereas the liveness probes are dedicated to monitoring the ongoing health of your applications.
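A readiness probe is declared on the container in much the same way as a liveness probe. The fragment below is a sketch only. The /ready path, port 3000, and the timing values are hypothetical and assume that the application serves an HTTP endpoint that reports whether it can accept requests.

```yaml
# Fragment of the pod template in a Deployment manifest
spec:
  template:
    spec:
      containers:
        - name: nodejs-application
          image: my-docker-hub-account/nodejs-application:1.0.0
          readinessProbe:
            httpGet:
              path: /ready            # hypothetical readiness endpoint
              port: 3000              # hypothetical application port
            initialDelaySeconds: 5    # assumed: wait before the first check
            periodSeconds: 5          # assumed: check every five seconds
```

A failing readiness probe doesn’t restart the container. The pod is instead taken out of the set of endpoints that receive service traffic until the probe succeeds again.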

Deleting Deployments

Deleting a deployment object in Kubernetes has a cascading effect on the underlying ReplicaSets and pods; that is, they will also be removed unless the --cascade=orphan flag is specified. You can choose the cascading deletion strategy with one of three options: “background”, “orphan”, or “foreground”, of which “background” is the default.

By default, the respective resources (pod replicas) will be deleted gracefully over a period of thirty seconds. You can specify a different period using the --grace-period flag. If this grace period is exceeded, Kubernetes forcibly terminates the remaining containers. Alternatively, you can force delete the pods from the outset by passing the --force flag. This will immediately delete the resources and skip the normal grace period.

To delete the deployment object you created, simply run the following command:

$ kubectl delete -f <manifest-file>.yaml

If you created it imperatively, execute this command:

$ kubectl delete deployments <deployment-name>

Conclusion

Deployments are one of the most commonly used resources when deploying container workloads to Kubernetes. As such, it’s important for software teams to understand how they work and where they fit into the container orchestration lifecycle in Kubernetes. Understanding how they function will equip you to make optimal and appropriate use of them for various scenarios pertaining to application releases, rollbacks and autoscaling. This post covered what deployments are, what they are used for, and how to manage them.